NVIDIA Launches AIConfigurator to Optimize Disaggregated LLM Serving

Key Facts

- What: NVIDIA introduced AIConfigurator, a tool within the Dynamo AI inference platform that automatically recommends optimal configurations for disaggregated LLM serving.

- Why: It addresses the complex multi-dimensional search space of hardware, parallelism, and prefill/decode splits that makes manual optimization impractical.

- How: The tool eliminates guesswork by analyzing workload requirements and suggesting high-performance, cost-effective setups for production LLM inference.

- Context: Builds on the growing industry adoption of disaggregated inference, pioneered by systems like DistServe, which split prefill and decode phases across independent compute pools.

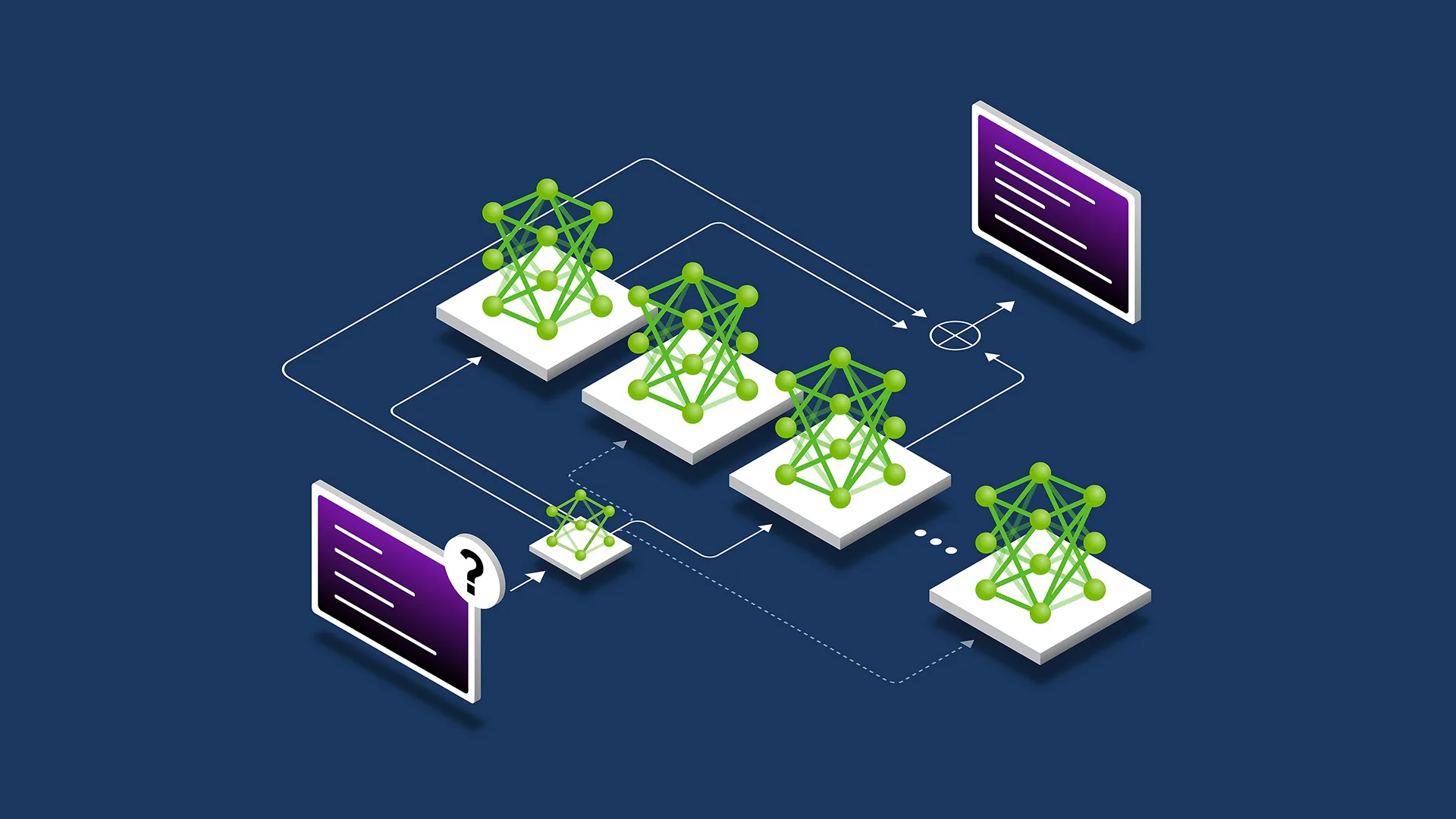

NVIDIA has released AIConfigurator, a new component of its Dynamo AI platform designed to remove the complexity from deploying and optimizing large language models (LLMs) for high-performance, cost-effective serving. The tool tackles a core challenge in modern AI inference: the massive, multi-dimensional configuration space involving hardware selection, parallelism strategies, and the split between prefill and decode phases. According to NVIDIA’s official developer blog, AIConfigurator recommends optimal configurations tailored to specific workloads, helping organizations avoid time-consuming manual experimentation and exhaustive testing.

Deploying LLMs at scale has become an increasingly difficult engineering task. The ideal setup for a given workload, whether measured by throughput, latency, or cost, sits within a vast search space that spans GPU type, tensor parallelism, pipeline parallelism, and how to disaggregate the prefill (prompt processing) and decode (token generation) stages. Exploring this space by hand or benchmarking it exhaustively is impractical for most teams.
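To give a sense of scale, that search space can be sketched in a few lines of Python. The GPU names, parallelism degrees, worker counts, and 64-GPU budget below are illustrative assumptions, not AIConfigurator inputs or NVIDIA-published values, and the function name `enumerate_configs` is hypothetical.

```python
from itertools import product

# Illustrative assumptions only: placeholder GPU names and option ranges.
GPU_TYPES = ["gpu_a", "gpu_b", "gpu_c"]
TENSOR_PARALLEL = [1, 2, 4, 8]
PIPELINE_PARALLEL = [1, 2, 4]
WORKER_COUNTS = range(1, 9)   # replicas per phase, 1 through 8
MAX_GPUS = 64                 # hypothetical cluster budget


def enumerate_configs(max_gpus=MAX_GPUS):
    """Yield every disaggregated configuration that fits the GPU budget.

    Prefill and decode each get their own parallelism and replica count,
    which is what makes the space grow multiplicatively.
    """
    for gpu, p_tp, p_pp, n_p, d_tp, d_pp, n_d in product(
        GPU_TYPES,
        TENSOR_PARALLEL, PIPELINE_PARALLEL, WORKER_COUNTS,   # prefill pool
        TENSOR_PARALLEL, PIPELINE_PARALLEL, WORKER_COUNTS,   # decode pool
    ):
        gpus_used = n_p * p_tp * p_pp + n_d * d_tp * d_pp
        if gpus_used <= max_gpus:
            yield {
                "gpu": gpu,
                "prefill": {"tp": p_tp, "pp": p_pp, "workers": n_p},
                "decode": {"tp": d_tp, "pp": d_pp, "workers": n_d},
                "gpus_used": gpus_used,
            }


# Size of the raw grid before applying the budget: 3 * (4 * 3 * 8) ** 2
raw_grid_size = len(GPU_TYPES) * (
    len(TENSOR_PARALLEL) * len(PIPELINE_PARALLEL) * len(WORKER_COUNTS)
) ** 2
feasible = list(enumerate_configs())

print(f"raw grid: {raw_grid_size} combinations")   # 27648
print(f"within {MAX_GPUS} GPUs: {len(feasible)}")
```

Even this toy grid has tens of thousands of points, and evaluating each one by hand would mean a separate benchmark run. That is the cost a recommendation tool is meant to avoid.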

NVIDIA’s AIConfigurator aims to solve this by acting as an intelligent recommendation engine. As detailed in the company’s blog post titled “Removing the Guesswork from Disaggregated Serving,” the tool analyzes workload characteristics and suggests configurations that balance performance and efficiency. It is integrated into NVIDIA Dynamo, the company’s broader platform for scalable AI inference that supports disaggregated serving architectures.

The concept of disaggregated LLM serving has gained significant traction in the industry over the past 18 months. Early research from UCSD’s Hao AI Lab introduced DistServe, which proposed splitting LLM inference into separate prefill and decode phases running on independent compute pools. This approach allows each phase to scale independently, improving overall resource utilization and throughput. Today, major production frameworks including NVIDIA Dynamo have adopted similar disaggregated designs, according to updates from the Hao AI Lab.
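A back-of-the-envelope sizing exercise shows why independent scaling matters. The sketch below uses made-up throughput figures and a hypothetical helper, `workers_needed`. It ignores batching, queueing, and KV-cache transfer, so treat it as an illustration of the idea rather than a capacity model.

```python
import math


def workers_needed(requests_per_s, tokens_per_request, tokens_per_s_per_worker):
    """Smallest worker count whose combined token rate covers the demand."""
    demand = requests_per_s * tokens_per_request
    return math.ceil(demand / tokens_per_s_per_worker)


# Hypothetical workload and per-worker rates (assumed, not measured).
REQUESTS_PER_S = 10
OUTPUT_TOKENS = 200     # generated during decode
PREFILL_RATE = 8000     # prompt tokens/s per prefill worker
DECODE_RATE = 500       # output tokens/s per decode worker


def size_pools(prompt_tokens):
    """Return (prefill_workers, decode_workers) for a given prompt length."""
    prefill = workers_needed(REQUESTS_PER_S, prompt_tokens, PREFILL_RATE)
    decode = workers_needed(REQUESTS_PER_S, OUTPUT_TOKENS, DECODE_RATE)
    return prefill, decode


short_prompts = size_pools(prompt_tokens=2000)
long_prompts = size_pools(prompt_tokens=4000)
print(short_prompts)  # (3, 4)
print(long_prompts)   # (5, 4)
```

Under these assumptions, doubling the prompt length grows only the prefill pool from 3 to 5 workers while the decode pool stays at 4. A monolithic deployment would have to scale both phases together.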

NVIDIA’s new tool builds directly on this foundation. By automating configuration recommendations, AIConfigurator helps practitioners implement disaggregated serving without requiring deep expertise in systems-level optimization. The blog emphasizes that the ideal configuration for any workload is difficult to discover through traditional methods, making an automated approach particularly valuable for enterprises running LLMs in production.

Impact

For developers and ML engineers, AIConfigurator lowers the barrier to deploying efficient LLM serving systems. Instead of spending weeks tuning parameters across GPU clusters, teams can use NVIDIA’s recommendation engine to quickly identify high-performing setups.

This is especially relevant as organizations scale from experimentation to production. Disaggregated serving architectures can deliver substantial improvements in throughput and latency compared to traditional monolithic inference deployments. Related research such as HexGen-2 has reported up to 2.0× higher throughput and 1.5× lower latency in heterogeneous environments when using optimized disaggregated approaches.

For NVIDIA, the launch strengthens its standing in the rapidly growing AI inference market. Together, Dynamo and AIConfigurator present the company as a full-stack provider of both the hardware (GPUs) and the software tools needed to run LLMs efficiently. This matters as competition intensifies from cloud providers and open-source frameworks that also support disaggregated inference.

Enterprises benefit from better cost efficiency. Optimizing the split between prefill and decode phases can improve GPU utilization, reducing the number of expensive accelerators needed to serve a given number of users. With GPU demand high and power consumption a growing concern, such efficiency gains have a direct financial and environmental impact.

What's Next

NVIDIA is expected to continue expanding the capabilities of AIConfigurator and the Dynamo platform. While the initial release focuses on configuration recommendations, future iterations may incorporate more sophisticated workload prediction, real-time optimization, and support for an even wider range of model architectures and hardware configurations.

The broader industry trend toward disaggregated and heterogeneous inference is likely to accelerate. Academic and industry research, including systems like Prism for recommendation models and various HexGen variants, shows continued innovation in resource disaggregation techniques. NVIDIA’s tool makes these advanced architectures accessible to a wider audience beyond elite research teams.

As LLM applications move deeper into production environments across industries, tools that simplify deployment and optimization will become increasingly important. AIConfigurator represents a significant step toward making state-of-the-art inference techniques available to mainstream AI practitioners.

The company has not yet announced specific timelines for additional features, but the developer blog suggests ongoing investment in making LLM serving more predictable and manageable.

Sources

- NVIDIA Developer Blog: Removing the Guesswork from Disaggregated Serving

- Hao AI Lab: Disaggregated Inference: 18 Months Later

- YouTube: How to Optimize AI Serving with NVIDIA Dynamo AIConfigurator

- OpenReview: HexGen-2 Paper
