The short version

AIConfigurator is a tool from NVIDIA that automatically works out the best way to run massive AI language models (like the ones powering ChatGPT) on GPU servers, making them faster and cheaper without engineers having to guess. It tackles the complexity of "disaggregated serving," where different parts of an AI's work are split across separate hardware pools to run more efficiently. For everyday people, this means the AI tools you use, from chatbots to image generators, could respond faster and cost less, and similar ideas could eventually help AI run better on your own devices.

What happened

Imagine you're trying to bake a huge cake for a party, but your kitchen is tiny. You can't fit the whole mixing bowl, oven, and fridge in one spot, so you split the job: mix batter in one room, bake in another, and chill frosting across the hall. Now, how do you decide the perfect setup for each room's tools, timing, and helpers without wasting time or money? That's the puzzle NVIDIA is solving with their new tool called AIConfigurator, part of their Dynamo system.

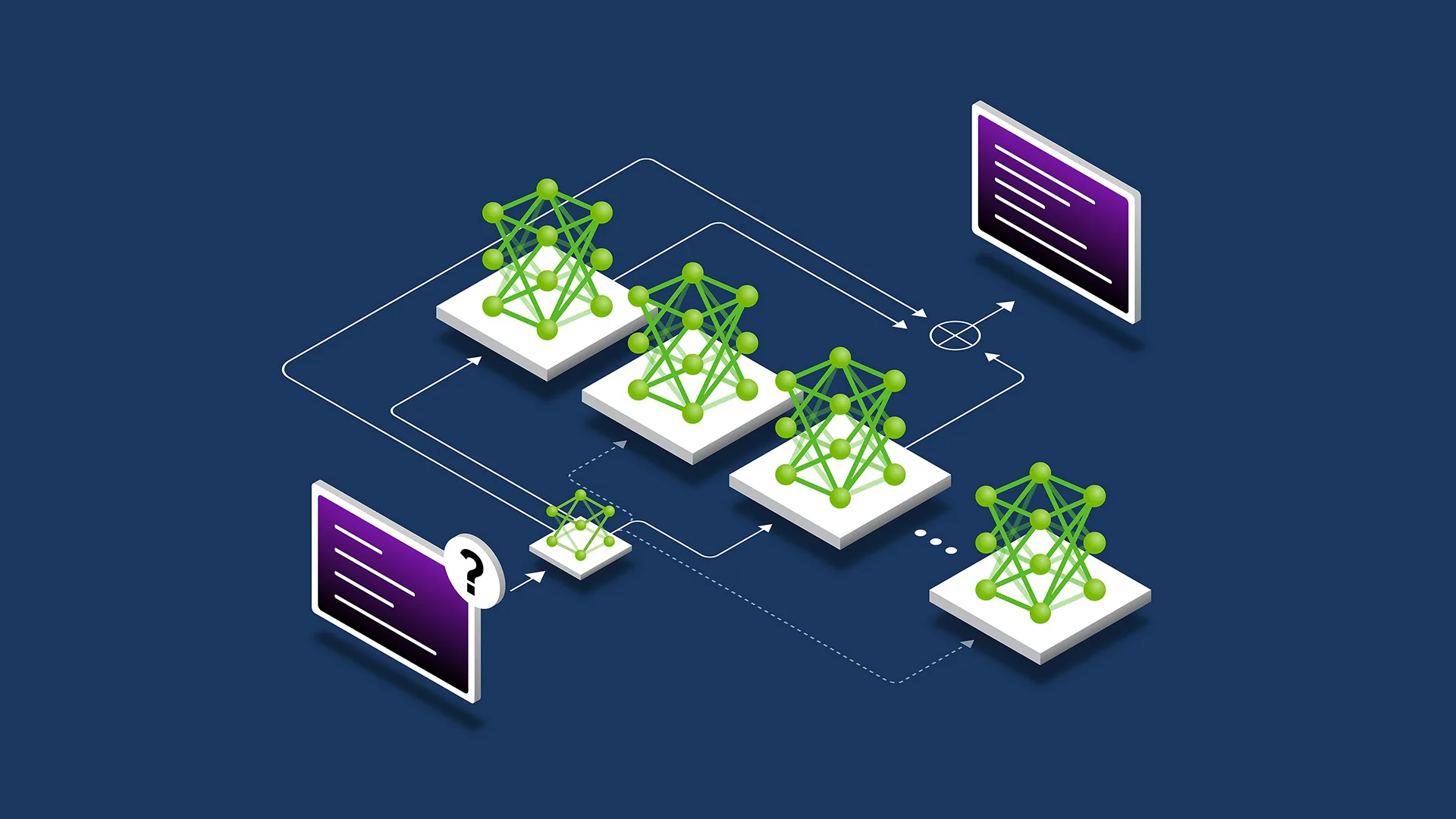

Running big AI models – think of them as super-smart digital brains that generate text, pictures, or code – is like that baking chaos on steroids. These models do two main jobs: first, "prefill" where they read and understand your question (like reading a recipe), and second, "decode" where they spit out answers word by word (like assembling the cake). Normally, engineers have to manually test endless combinations of computer hardware, like how many chips (GPUs), how to split the work, and where to put each part. It's a giant maze of options – too many to check by hand.
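To get a feel for how fast that maze grows, here is a small Python sketch that simply counts combinations. The knobs and their values are hypothetical, chosen only for illustration; they are not AIConfigurator's actual settings.

```python
from itertools import product

# Hypothetical knobs an engineer might tune by hand (illustrative values only).
gpu_counts = [1, 2, 4, 8]           # GPUs given to each worker
tensor_parallel = [1, 2, 4, 8]      # ways to split one model across GPUs
batch_sizes = [1, 4, 16, 64, 256]   # requests processed together
prefill_workers = range(1, 9)       # workers that read the prompt
decode_workers = range(1, 9)        # workers that generate the answer

def valid(gpus, tp):
    # A worker cannot split the model across more GPUs than it has.
    return tp <= gpus

configs = [
    c for c in product(gpu_counts, tensor_parallel, batch_sizes,
                       prefill_workers, decode_workers)
    if valid(c[0], c[1])
]
num_configs = len(configs)
print(num_configs)  # 3200
```

Even this toy grid of five knobs yields 3,200 valid setups, and real deployments have many more knobs than this.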

Disaggregated serving is the trick of splitting these jobs onto separate groups of computers: one pool for the reading part (prefill), another for the answering part (decode). This lets companies scale them independently, like having extra ovens for baking but fewer fridges if you don't need them. But picking the right split? Overwhelming. NVIDIA's AIConfigurator acts like an automatic chef: it takes your setup as input, explores that massive maze using smart math rather than exhaustive trial-and-error on real hardware, and returns a recommended recipe. The blog calls it "removing the guesswork," and it's designed for high-performance, cost-effective serving of large language models (LLMs).
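The general idea of scoring setups with math instead of testing each one on real machines can be sketched in a few lines of Python. This is a toy illustration only: the source does not describe how AIConfigurator models performance, so every number, formula, and name below (`Plan`, `first_token_seconds`, `requests_per_second`, `best_plan`) is an assumption made up for this example.

```python
from dataclasses import dataclass
from itertools import product

# All numbers below are invented for illustration; they are not measurements.
PROMPT_TOKENS = 2000          # tokens read per request (prefill)
OUTPUT_TOKENS = 200           # tokens generated per request (decode)
PREFILL_TOK_PER_SEC = 10_000  # assumed prefill speed of one GPU
DECODE_TOK_PER_SEC = 500      # assumed decode speed of one GPU
MAX_FIRST_TOKEN_SEC = 0.1     # latency target for the first word
GPU_BUDGET = 16               # total GPUs available

@dataclass(frozen=True)
class Plan:
    prefill_gpus: int
    decode_gpus: int

def first_token_seconds(plan):
    # Toy assumption: prefill work divides evenly across the prefill pool.
    return PROMPT_TOKENS / (PREFILL_TOK_PER_SEC * plan.prefill_gpus)

def requests_per_second(plan):
    # The slower of the two pools limits the whole pipeline.
    prefill_rate = plan.prefill_gpus * PREFILL_TOK_PER_SEC / PROMPT_TOKENS
    decode_rate = plan.decode_gpus * DECODE_TOK_PER_SEC / OUTPUT_TOKENS
    return min(prefill_rate, decode_rate)

def best_plan():
    # Every way to divide the GPU budget between the two pools.
    candidates = [
        Plan(p, d)
        for p, d in product(range(1, GPU_BUDGET), repeat=2)
        if p + d <= GPU_BUDGET
    ]
    # Keep only plans that meet the first-word latency target.
    feasible = [c for c in candidates
                if first_token_seconds(c) <= MAX_FIRST_TOKEN_SEC]
    # Prefer higher throughput; break ties by using fewer GPUs.
    return max(
        feasible,
        key=lambda c: (requests_per_second(c),
                       -(c.prefill_gpus + c.decode_gpus)),
    )

plan = best_plan()
print(plan, requests_per_second(plan))
# Plan(prefill_gpus=5, decode_gpus=10) 25.0
```

With these made-up numbers, decoding is the slower job per GPU, so the sketch settles on twice as many decode GPUs as prefill GPUs and leaves one GPU unused because adding it would not raise throughput. A real tool would rely on far more detailed performance estimates, but the shape of the problem is the same: estimate, compare, pick.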

This builds on industry trends: other research systems, such as DistServe and HexGen-2, also split AI work this way and report speed boosts of up to 2x with lower costs. NVIDIA's version is tailored for their hardware, making it easier for companies using their chips.

Why should you care?

You might not tweak AI servers yourself, but you feel the results every day. Slower AI means waiting longer for ChatGPT to reply, higher costs mean apps charge you more, and crashes mean frustration. AIConfigurator aims to make AI run smoother and cheaper behind the scenes, so services that run on NVIDIA hardware, paid or free, could get faster without price hikes. It's like upgrading the engine in your car: you don't notice the gears, but your drive to work feels snappier and uses less gas.

For regular folks, this matters because AI is everywhere: writing emails, editing photos, recommending Netflix shows, or helping kids with homework. Better serving means quicker AI that feels more reliable. Companies could save money (some related studies report savings of 30-50%), which could trickle down as lower subscription fees or expanded free tiers. Plus, as AI moves to phones and home devices, similar techniques could help your gadgets handle fancy AI without lagging.

What changes for you

Practically, not much flips overnight – this is for developers and big companies first. But here's the ripple effect:

- Faster apps: Your AI chatbot conversations speed up. No more staring at "typing..." bubbles for ages.

- Cheaper services: Cloud providers using NVIDIA gear (as many do) could cut costs, so tools like Midjourney or Grammarly might drop prices or add features.

- Smoother experience: Less downtime or errors when AI is slammed, like during viral trends.

- Home AI potential: Tools like Llmfit, aimed at home servers, hint at easier local AI runs. Imagine running a private ChatGPT on your PC without headaches.

- Broader access: More efficient AI means smaller companies can afford it, leading to new apps tailored for you, like personalized fitness coaches or budget planners.

If you're a hobbyist tinkering with AI on a home setup, this paves the way for plug-and-play tools that auto-optimize, saving you hours of frustration.

Frequently Asked Questions

### What is disaggregated serving, and why is it a big deal?

Disaggregated serving splits an AI model's work, understanding your input and generating output, across different computer groups, like separate teams for reading and writing. It's a big deal because it lets companies use hardware more efficiently: related systems such as DistServe and HexGen-2 report speed boosts of up to 2x along with lower costs, and NVIDIA Dynamo takes the same approach. For you, it means AI services could run faster and cheaper without wasting power.

### Is NVIDIA AIConfigurator free to use?

The source doesn't give pricing details, so it isn't confirmed whether it's free or paid. It's part of NVIDIA's Dynamo platform and is likely aimed at people already using NVIDIA hardware. Check NVIDIA's developer site for access; many such tools start free for testing.

### How does this make AI faster for everyday apps like ChatGPT?

It auto-finds the best hardware mix and work-split, avoiding slow manual tweaks. Think of it as a GPS for AI setup: instead of guessing roads, it picks the fastest route. You get quicker replies in apps powered by these optimized models, even if the service doesn't advertise the change.

### Will this work on my phone or home computer?

Right now, it's aimed at big server setups for companies, but the ideas (like smart config tools) inspire home versions, like Llmfit for local servers. It could lead to easier AI on personal devices, making your phone's AI assistant smarter without draining battery.

### When can I expect to see changes in the AI tools I use?

The source gives no exact timeline, but as companies adopt it (NVIDIA is promoting it now), improvements could reach popular services within months. Watch for faster response times in apps that run on NVIDIA tech; the tool is already part of NVIDIA's Dynamo framework.

The bottom line

NVIDIA's AIConfigurator automates the black art of running huge AI models efficiently by working out how to split the work and use the hardware, with no more engineer guesswork. For you, the non-tech user, it could translate to zippier AI experiences in daily apps, potential savings on subscriptions, and a push toward more accessible home AI. It's not flashy, but it's the kind of behind-the-scenes upgrade that makes tech feel magical instead of frustrating. Keep an eye on your favorite AI tools; they may soon perform better because of it.