How to Ship Production-Grade Features with Claude Code Review: A Vibe Coder’s Complete Workflow

Why this matters for builders

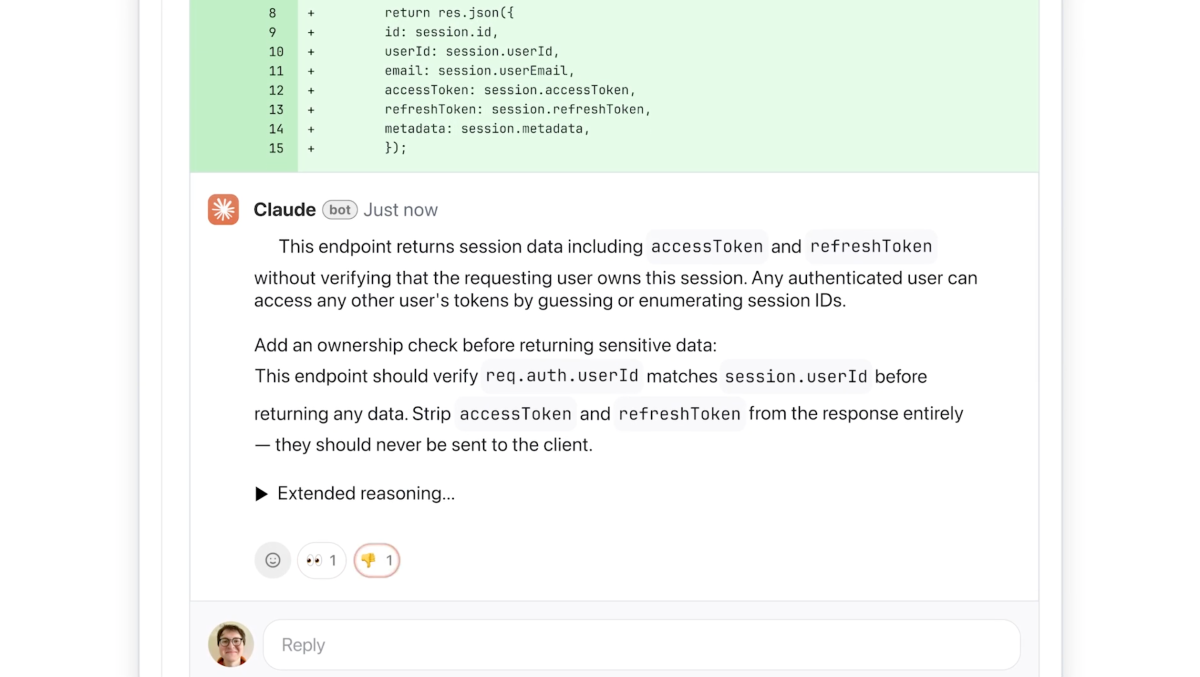

Anthropic’s new Code Review tool lets you automatically surface logical errors, security risks, and architectural issues in AI-generated pull requests using a multi-agent system that runs in parallel, aggregates findings, removes duplicates, and posts color-coded comments directly in GitHub.

The explosion of “vibe coding” — giving plain-language instructions to Claude Code and watching it generate thousands of lines of code — has dramatically increased velocity but also created a review bottleneck. Enterprise teams report pull-request volume exploding while human reviewers drown in low-signal noise. Code Review is Anthropic’s direct answer: an always-on AI reviewer that focuses on logic errors first, explains its reasoning step-by-step, labels severity (red/yellow/purple), and gives immediately actionable fixes.

It ships today in research preview for Claude for Teams and Claude for Enterprise customers. Pricing is token-based; Anthropic estimates $15–$25 per review depending on code complexity. A lighter security scan is included, with deeper analysis available via the recently launched Claude Code Security product.

This changes the game for solo builders and small teams who want to move at AI speed without sacrificing quality. You can now treat Claude as a hyper-productive junior developer and have another senior Claude automatically review its work before it touches main.

When to use it

- You generate >30% of new code with Claude Code or similar tools

- Your team is experiencing PR review fatigue or merge delays

- You want consistent logical-error catching across every pull request

- You need to enforce internal best practices without writing custom linter rules

- You are shipping customer-facing features where bugs have high business impact

- You want a second pair of eyes on security-sensitive changes (light scan included)

The full process — from idea to shipped feature with AI review baked in

1. Define the goal (30 minutes)

Start every feature with a one-paragraph spec that includes success criteria and review expectations.

Starter prompt you can copy to Claude:

You are an experienced staff engineer. I want to build [feature name].

Business goal: [one sentence]

User story: [one sentence]

Technical constraints: [language, framework, existing architecture]

Non-functional requirements:

- Must handle X scale

- Must maintain <200ms p95 latency

- Security: no new attack surface

- Observability: emit structured logs + metrics

Success looks like:

- [measurable outcome]

- [measurable outcome]

After you generate the code, I will run it through Anthropic Code Review. Anticipate the kinds of logical errors it will flag and avoid them proactively.

Force yourself to write acceptance criteria before any code is generated. This single step dramatically reduces the volume of issues Code Review will surface later.
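One way to hold yourself to this is to refuse to send the prompt while any bracketed slot is still empty. The following is a minimal sketch, assuming the spec is kept as a Python string with `[placeholder]` slots like the starter prompt above. `SPEC_TEMPLATE`, `fill_spec`, and `unfilled_placeholders` are hypothetical names for illustration, not part of any Claude tooling.

```python
import re

# Abbreviated version of the starter prompt; slot names are illustrative.
SPEC_TEMPLATE = """\
I want to build [feature name].
Business goal: [business goal]
User story: [user story]
Success looks like:
- [measurable outcome 1]
- [measurable outcome 2]
"""

# Matches one [slot] with no nested brackets.
PLACEHOLDER = re.compile(r"\[([^\[\]]+)\]")


def fill_spec(template: str, values: dict[str, str]) -> str:
    """Replace each [name] slot that has a value; leave the rest untouched."""
    return PLACEHOLDER.sub(lambda m: values.get(m.group(1), m.group(0)), template)


def unfilled_placeholders(spec: str) -> list[str]:
    """Return the names of slots that are still unfilled."""
    return PLACEHOLDER.findall(spec)


if __name__ == "__main__":
    draft = fill_spec(SPEC_TEMPLATE, {
        "feature name": "CSV export",
        "business goal": "Let customers pull their data without support tickets.",
        "user story": "As an admin, I can download all orders as CSV.",
        "measurable outcome 1": "Export of 100k rows completes in under 10 s",
    })
    missing = unfilled_placeholders(draft)
    if missing:
        print("Spec is not ready, still missing:", ", ".join(missing))
```

Running the check before every generation turn makes a missing acceptance criterion a hard stop rather than something you notice after the PR is open.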

2. Shape the prompt / spec (vibe coding phase)

Good prompting is now half the battle. Use Claude Code to generate the implementation, but feed it the refined spec from step 1.

Recommended pattern:

- First turn: ask for architecture + file plan

- Second turn: ask for implementation of one file at a time

- Third turn: ask for tests + edge cases

- Final turn: ask Claude to self-review against the same criteria Code Review applies (logic errors first, with severity labels)

Example self-review prompt:

Act as Anthropic’s Code Review agent. Analyze the code you just generated for logical errors only. For each potential issue:

1. State the suspected bug

2. Explain why it is problematic

3. Suggest the exact fix

Label severity: red (must fix), yellow (should review), purple (tied to a pre-existing problem in the codebase).

3. Scaffold & implement

Once you have the code, open the PR as usual. If you are on Claude for Teams/Enterprise with Code Review enabled by your engineering lead, the tool activates automatically on every PR.

What happens behind the scenes (from the announcement):

- Multiple agents spin up in parallel, each examining the change from a different perspective (correctness, security, performance, maintainability, consistency with existing code).

- Agents cross-verify findings to reduce false positives.

- A final aggregator ranks issues, removes duplicates, and posts:

  - One summary comment at the top of the PR

  - Inline comments on specific lines

  - Step-by-step reasoning for every finding

  - Severity coloring (red/yellow/purple)
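The fan-out, de-duplicate, and rank flow described above can be pictured with a toy sketch. It only illustrates the pattern and is not Anthropic's implementation: the `Finding` type, the two stand-in reviewer functions, and the severity ordering are all assumptions made for this example.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable

# Assumed ordering for illustration: lower number sorts first.
SEVERITY_RANK = {"red": 0, "yellow": 1, "purple": 2}


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    severity: str
    message: str


def correctness_reviewer(diff: str) -> list[Finding]:
    # Stand-in for a real agent; returns a canned finding.
    return [Finding("cart.py", 42, "red", "Total ignores quantity")]


def security_reviewer(diff: str) -> list[Finding]:
    # Reports the same issue again plus one of its own.
    return [
        Finding("cart.py", 42, "red", "Total ignores quantity"),
        Finding("auth.py", 7, "yellow", "Token compared with =="),
    ]


def review(diff: str, reviewers: list[Callable[[str], list[Finding]]]) -> list[Finding]:
    """Run reviewers in parallel, drop exact duplicates, and rank by severity."""
    with ThreadPoolExecutor() as pool:
        results = pool.map(lambda reviewer: reviewer(diff), reviewers)
        merged = [f for findings in results for f in findings]
    unique = set(merged)  # frozen dataclasses are hashable, so exact repeats collapse
    return sorted(
        unique,
        key=lambda f: (SEVERITY_RANK[f.severity], f.file, f.line, f.message),
    )


if __name__ == "__main__":
    for f in review("<diff text>", [correctness_reviewer, security_reviewer]):
        print(f"[{f.severity}] {f.file}:{f.line} {f.message}")
```

Exact-match de-duplication is the simplest possible choice here; a real aggregator would need some notion of near-duplicate findings, which this sketch does not attempt.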

4. Validate & iterate

Treat Code Review comments like a senior engineer’s feedback.

Practical checklist when reviewing AI-generated review comments:

- Red issues → fix immediately (almost always correct)

- Yellow issues → read the reasoning. Fix the issue if the reasoning holds; otherwise reply “acknowledged, will address in next PR”

- Purple issues → these often point at pre-existing problems in the codebase. Create a tech-debt ticket instead of fixing in this PR

- If you disagree with a finding, reply directly in the PR thread. This improves the model over time (Anthropic uses feedback)

After you address the comments, push a new commit. Code Review will re-analyze the updated PR.
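The checklist amounts to a small lookup from severity to next action. Here is a sketch, assuming you have copied findings out of the PR by hand as (severity, summary) pairs. The pair format and the `triage` function are hypothetical and exist only for illustration.

```python
from collections import defaultdict

# Actions taken from the checklist above.
ACTIONS = {
    "red": "fix in this PR",
    "yellow": "read reasoning, then fix or acknowledge",
    "purple": "open a tech-debt ticket",
}


def triage(findings: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Group finding summaries by the action the checklist prescribes."""
    plan: dict[str, list[str]] = defaultdict(list)
    for severity, summary in findings:
        action = ACTIONS.get(severity.lower())
        if action is None:
            raise ValueError(f"unknown severity: {severity!r}")
        plan[action].append(summary)
    return dict(plan)


if __name__ == "__main__":
    plan = triage([
        ("red", "Off-by-one in pagination"),
        ("purple", "Legacy helper swallows exceptions"),
        ("yellow", "Retry loop has no backoff"),
    ])
    for action, items in plan.items():
        print(f"{action}: {items}")
```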

5. Ship safely

Only merge once:

- All red issues are resolved

- You have manually tested the change

- Automated tests are green

- (Optional but recommended) You have run the deeper Claude Code Security scan on the changed files

Pro move: Add a required GitHub status check along the lines of “Code Review: No red issues” so a PR cannot merge while red findings are open. Enterprise orgs are likely to configure a policy like this.
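The merge conditions can be written down as a single predicate, which is what a required check would ultimately evaluate. This is a minimal sketch under assumptions: the `MergeState` fields are hypothetical inputs you would populate from your own CI and PR data, and nothing here calls a real GitHub or Anthropic API.

```python
from dataclasses import dataclass


@dataclass
class MergeState:
    open_red_issues: int   # unresolved red findings on the PR
    manually_tested: bool  # someone exercised the change by hand
    tests_green: bool      # automated test suite passed


def merge_blockers(state: MergeState) -> list[str]:
    """Return human-readable reasons the PR should not merge yet."""
    blockers = []
    if state.open_red_issues > 0:
        blockers.append(f"{state.open_red_issues} red issue(s) unresolved")
    if not state.manually_tested:
        blockers.append("change has not been manually tested")
    if not state.tests_green:
        blockers.append("automated tests are not green")
    return blockers


if __name__ == "__main__":
    state = MergeState(open_red_issues=1, manually_tested=True, tests_green=True)
    blockers = merge_blockers(state)
    print("OK to merge" if not blockers else "Blocked: " + "; ".join(blockers))
```

Returning the list of reasons rather than a bare boolean keeps the gate explainable: whoever is blocked sees exactly which condition failed.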

Copy-paste starter templates

Template: Feature spec for Claude Code

Build a [component] that does X.

Context from existing codebase:

- We use [tech stack]

- Authentication is handled by [service]

- Data layer is [Prisma/ORM/etc]

Acceptance criteria:

- [list]

Edge cases to handle:

- [list]

After implementation, self-review for logical errors only.

Template: Post-implementation self-review prompt

Run a simulated Anthropic Code Review on this PR diff.

Focus exclusively on logic errors and security issues.

For each finding provide:

- Location (file + line)

- Severity (red/yellow/purple)

- One-sentence description of the bug

- Why it matters

- Suggested code change (exact diff if possible)

Pitfalls and guardrails

### What if I get too many false positives?

The tool is deliberately scoped to logic errors first, which keeps the false-positive rate low. If you still see noise, ask your engineering lead to tune the custom checks. Avoid enabling style or formatting rules; that’s what your existing linter is for.

### What if the review is too slow?

Reviews are resource-intensive because of the multi-agent architecture. Complex changes can take longer. Start with smaller, focused PRs (single-responsibility changes). This also makes human review easier.

### What if I’m not on Teams or Enterprise yet?

The feature is currently in research preview for Claude for Teams and Claude for Enterprise only. You can approximate the workflow today with the self-review prompt above, adding Claude Code Security for deeper scans if you have access to it. Solo builders can adopt this pattern immediately.

### What if Code Review misses something?

It is not a replacement for human judgment or manual testing. Treat it as an extremely fast, consistent first-pass reviewer. You still own the code.

### How much will this cost?

Expect $15–$25 per review on average. High-velocity teams shipping 20–50 PRs per week should budget accordingly. The cost is worth it when you consider the engineering time saved and risk avoided.
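To make "budget accordingly" concrete, the weekly range follows directly from the two figures quoted above. The sketch below only multiplies them out. The 20 to 50 PRs per week and $15 to $25 per review come from this article, the one-review-per-PR simplification is an assumption, and real costs depend on token usage.

```python
def weekly_cost_range(prs_low: int, prs_high: int,
                      cost_low: float, cost_high: float) -> tuple[float, float]:
    """Lowest and highest weekly spend, assuming exactly one review per PR."""
    return prs_low * cost_low, prs_high * cost_high


if __name__ == "__main__":
    low, high = weekly_cost_range(20, 50, 15.0, 25.0)
    # 20 * 15 = 300 and 50 * 25 = 1250
    print(f"Estimated weekly spend: ${low:,.0f} to ${high:,.0f}")
```

Because Code Review re-analyzes a PR after each new push, PRs that go through several rounds may cost more than a single review, so treat the lower figure as a floor.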

What to do next — 7-day action checklist

- Write your next feature using the spec template above

- Generate code with Claude Code

- Run the self-review prompt before opening the PR

- Open PR and observe real Code Review comments (if you have access)

- Fix all red issues, comment on yellow ones

- Merge and measure cycle time vs previous features

- Document what kinds of issues Code Review caught for your specific codebase
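Measuring cycle time needs nothing more than the opened and merged timestamps of each PR. The following is a small sketch, assuming you have copied those timestamps as ISO 8601 strings by hand or from your own export. The function names and the sample data are made up for illustration.

```python
from datetime import datetime
from statistics import median


def cycle_hours(opened: str, merged: str) -> float:
    """Hours between PR open and merge, given ISO 8601 timestamps."""
    delta = datetime.fromisoformat(merged) - datetime.fromisoformat(opened)
    return delta.total_seconds() / 3600


def median_cycle_hours(prs: list[tuple[str, str]]) -> float:
    """Median open-to-merge time in hours across (opened, merged) pairs."""
    return median(cycle_hours(opened, merged) for opened, merged in prs)


if __name__ == "__main__":
    # Illustrative data only: (opened, merged) pairs.
    before = [
        ("2026-03-02T09:00:00", "2026-03-04T09:00:00"),
        ("2026-03-03T10:00:00", "2026-03-03T22:00:00"),
    ]
    after = [
        ("2026-03-10T09:00:00", "2026-03-10T15:00:00"),
        ("2026-03-11T09:00:00", "2026-03-11T19:00:00"),
    ]
    print(f"before: {median_cycle_hours(before):.1f} h")
    print(f"after:  {median_cycle_hours(after):.1f} h")
```

The median is used instead of the mean so that one PR that sat open over a weekend does not dominate the comparison.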

The age of unchecked vibe coding is ending. The winning teams will be those who combine the raw generation power of Claude Code with the quality gate of Code Review. Start building that muscle now.

Sources

- Original announcement: https://techcrunch.com/2026/03/09/anthropic-launches-code-review-tool-to-check-flood-of-ai-generated-code/

- Anthropic product pages and quotes from Cat Wu, Head of Product

- Supporting coverage: The New Stack, VentureBeat, The Hacker News (Feb/Mar 2026)

- Claude Code Security launch context (deeper scanning companion product)
