Code Review for Claude Code: A Technical Deep Dive

Executive Summary

Anthropic has launched Code Review, a multi-agent AI system integrated into Claude Code that automatically analyzes GitHub pull requests generated by AI coding agents. The tool focuses exclusively on logical errors rather than style issues, employs parallel specialized agents followed by an aggregator/ranker, and surfaces findings with step-by-step reasoning, severity color-coding (red/yellow/purple), and suggested fixes. It is initially available in research preview for Claude for Teams and Claude for Enterprise customers. Average cost per review is estimated at $15–$25 (token-based). The architecture is explicitly designed to address the bottleneck created by the dramatic increase in AI-generated pull requests while maintaining a low false-positive rate on high-priority bugs. A lighter security analysis is included, with deeper scanning available via the separately launched Claude Code Security product.

Technical Architecture

The core innovation of Anthropic’s Code Review lies in its multi-agent orchestration rather than a single monolithic LLM call. When a pull request is opened (or when the feature is enabled by default for an engineering organization), the system triggers the following pipeline:

-

Dispatcher – Detects the PR and gathers context: the diff, related files, commit history, and any available repository-level documentation or coding standards.

-

Parallel Analysis Agents – Multiple specialized agents are spun up concurrently. Each agent is given a distinct “perspective” or analysis dimension. Although Anthropic has not published the exact number or specialization list, the description implies agents focused on:

- Algorithmic correctness and edge-case logic

- State management and data flow

- Concurrency and race conditions

- Resource management and leaks

- API contract compliance and integration points

- Performance and scalability regressions

These agents operate independently, reducing latency through parallelism while increasing coverage through diverse reasoning paths.

-

Cross-Verification Layer – Agents cross-check each other’s findings. This step is critical for reducing false positives, a known pain point with earlier automated code review tools. Only issues that survive cross-verification proceed.

-

Aggregator & Ranker Agent – A final LLM-based agent receives all validated findings, removes duplicates, consolidates overlapping issues, assigns severity (red = highest priority / must-fix, yellow = worth reviewing, purple = related to pre-existing or historical bugs), and produces:

- A single overview comment on the PR

- Inline annotations on specific lines

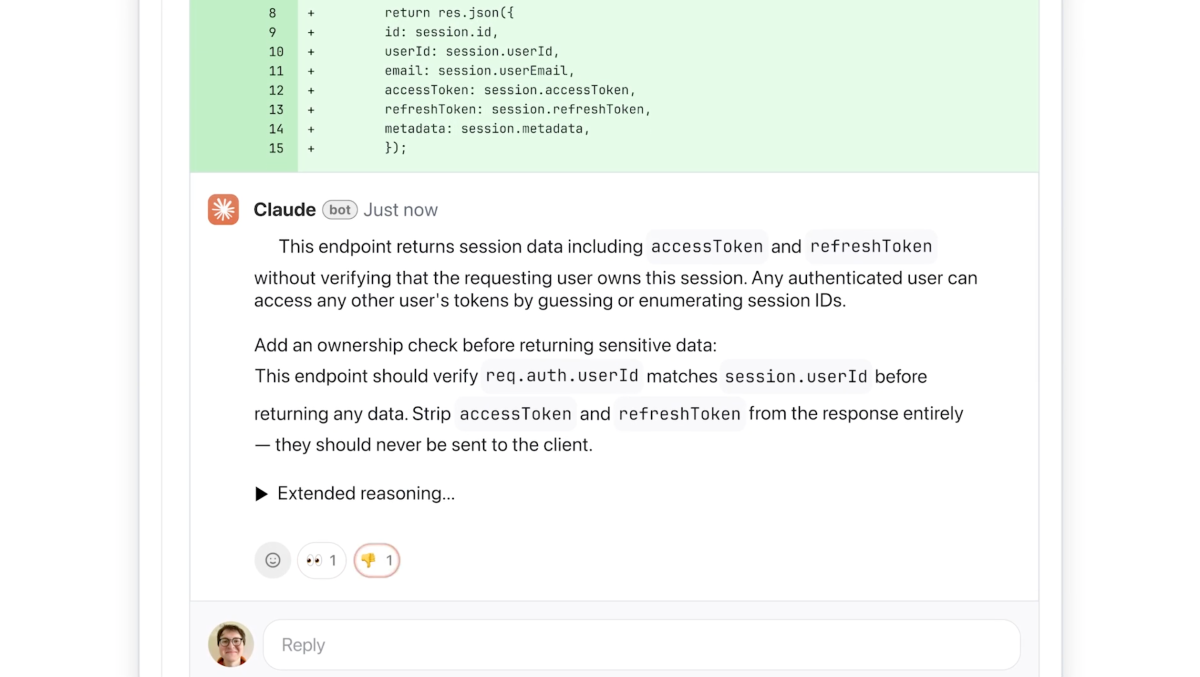

- Step-by-step natural-language explanations of the suspected bug, why it is problematic, and a concrete suggested fix

-

Customization Layer – Engineering leads can inject organization-specific rules or additional static-analysis-style checks that the agents consider during their analysis.

The system integrates directly with GitHub, posting comments as if it were a senior engineer. Because it is built on top of Claude’s latest models (presumably Claude 3.5/4 family or a specialized fine-tune), it inherits strong code understanding capabilities while the multi-agent design compensates for the limitations of single-pass generation.

A lighter security analysis is performed within the same workflow; for comprehensive vulnerability scanning and patch suggestions, Anthropic directs users to the companion product Claude Code Security, which was launched earlier in 2026 and performs deeper static + LLM-augmented scanning across entire codebases.

Performance Analysis

Public benchmark numbers have not yet been disclosed by Anthropic. The company instead relies on qualitative signals and internal usage data:

- Anthropic’s own developers now expect to see Code Review comments on PRs and reportedly “get a little nervous” when they are absent, indicating high internal trust.

- Emphasis is placed on logical error detection with a deliberately low false-positive rate. Cat Wu noted that developers are highly sensitive to noisy AI feedback; by restricting scope to bugs that should “almost definitely” be fixed, the tool maintains signal quality.

- Speed is highlighted as a key advantage: the parallel agent design allows reviews to complete “fast and efficiently” even on complex changes, scaling dynamically with PR size and codebase complexity.

- Cost metric provided: $15–$25 per average review. This is significantly higher than traditional static analysis tools but positioned as acceptable given the value of catching subtle logical bugs in AI-generated code at enterprise scale.

No head-to-head numbers against competitors (GitHub Copilot’s review features, Amazon CodeGuru, DeepSource, SonarQube with AI extensions, or other LLM-based reviewers such as those from CodiumAI or Bloop) are available in the announcement. However, the deliberate focus on logic over style and the multi-agent cross-verification represent a differentiated approach compared to single-pass “LLM as reviewer” solutions that have historically suffered from hallucinated issues and high noise.

Technical Implications

The launch signals a maturing “AI-native software engineering” stack. As “vibe coding” (natural-language-to-code via Claude Code or similar agents) dramatically increases code velocity, the traditional human review bottleneck becomes the limiting factor. Anthropic’s solution treats code review itself as an AI-first workflow, effectively inserting an always-on senior engineer into every PR.

For enterprises (Uber, Salesforce, Accenture, and others already heavy Claude Code users), this removes a major friction point and allows them to ship faster while theoretically reducing bug density. The ability to enable Code Review by default across an entire organization’s engineers suggests a shift toward policy-driven AI governance in the SDLC.

Ecosystem-wide, the product reinforces the trend of agentic coding platforms where multiple specialized agents collaborate. The same multi-agent pattern seen here is likely to appear in testing, documentation, refactoring, and migration agents in the near future.

The pricing model ($15–$25 per PR) also establishes a benchmark for the cost of high-quality AI code review. Organizations will need to evaluate whether the reduction in production bugs and engineering review time justifies the token spend, especially at high velocity.

Limitations and Trade-offs

- Resource Intensity: The multi-agent architecture is explicitly described as “resource-intensive.” This translates to higher latency on extremely large PRs and higher monetary cost.

- Scope Limitation: By focusing only on logical errors, the tool deliberately ignores style, readability, performance micro-optimizations, and certain classes of security issues (deeper analysis is delegated to Claude Code Security). Teams still require human review for architectural decisions, product fit, and subtle design concerns.

- Research Preview Status: Currently limited to Claude for Teams and Enterprise customers in research preview. Reliability, edge-case behavior, and integration maturity are not yet battle-tested at massive scale outside Anthropic’s own environment.

- False Positive / Negative Risk: While cross-verification aims to reduce false positives, any LLM-based system can still miss complex bugs or flag non-issues in novel code patterns.

- Token Cost Predictability: Because pricing is token-based and varies with code complexity, budgeting for high-velocity teams may be challenging without usage analytics.

Expert Perspective

From a senior ML engineering viewpoint, Anthropic’s Code Review is a pragmatic and well-targeted response to a real pain point the industry created for itself by accelerating code generation without equally accelerating verification. The multi-agent design with explicit cross-verification is technically sound; it mirrors successful patterns in agentic systems (e.g., debate-style verification, multi-path reasoning) that have shown improved reliability in other domains.

The decision to focus strictly on logical bugs is wise. It aligns the product with where current frontier models have the highest signal-to-noise ratio and where the business value is greatest—catching bugs that would otherwise reach production. The color-coded severity system and step-by-step explanations address the “black box” criticism often leveled at AI code tools, giving human reviewers a clear audit trail.

The $15–$25 price point will be the biggest adoption gate. At current Claude token economics, this implies each review consumes a non-trivial number of tokens, reflecting the cost of multiple model calls plus aggregation. As model efficiency improves and Anthropic potentially offers a distilled or specialized reviewer model, this cost should decrease.

Overall, this launch is significant because it moves AI from “code generator” to “full participant in the engineering feedback loop.” The next logical steps—agentic test generation that pairs with this reviewer, automated fix application, and continuous learning from accepted/rejected suggestions—would constitute a true autonomous coding colleague.

Technical FAQ

How does the multi-agent cross-verification work and what is the impact on false positives?

The system runs several agents in parallel with different analysis perspectives, then has them cross-verify each other’s proposed issues. Only findings that survive this consensus step reach the final aggregator. Anthropic claims this significantly lowers the false-positive rate compared with single-pass LLM reviewers, though exact reduction percentages are not yet published.

What is the difference between Code Review and Claude Code Security?

Code Review provides a lightweight security analysis focused on logical errors within the PR workflow. Claude Code Security is a separate, deeper vulnerability scanning product that scans entire codebases, identifies security issues traditional tools miss, and suggests targeted patches. The two are complementary.

Is Code Review limited to AI-generated code or does it work on human-written PRs as well?

The tool is technically agnostic to the origin of the code. It analyzes any pull request. However, it was designed in response to the surge in AI-generated PR volume and is marketed primarily to organizations using Claude Code heavily.

How is pricing calculated and can it be controlled?

Pricing is token-based and depends on the size and complexity of the change. Anthropic estimates $15–$25 for an average review. Engineering leads can likely configure which repositories, branches, or PR sizes trigger automatic review to manage cost.

References

- Anthropic product announcement via TechCrunch exclusive (March 9, 2026)

- Related coverage on Claude Code Security launch (February 2026)

- Technical commentary in The New Stack and VentureBeat